1. Introduction

Can artificial intelligence surpass human intelligence? This is a matter of general concern. In 2016, when AlphaGo defeated South Korean Go champion Lee Sedol, the concept of "deep learning" was repeatedly mentioned in media reports. Its successor, AlphaGo Zero, makes full use of deep learning. Instead of training on the game records of human players, AlphaGo Zero relies entirely on its own learning algorithm and learns by playing against itself [1]. After a period of self-learning, it decisively beat both the version of AlphaGo that had beaten Lee Sedol and the version that had beaten Ke Jie.

What it acquires in training is not only the rules and information about objects, but also the conditions under which objects are likely to appear [2]. In other words, it has begun to "feel" and capture possibilities rather than just what is already given. This kind of learning is a nonlinear, probabilistic process of feedback adjustment that deepens and takes shape over time. It is a learning process with a real history in time [3].

Artificial Intelligence (AI) was created to give computers the understanding and logical thinking of human beings [4]. Machine learning is one of the fastest growing approaches to implementing artificial intelligence [5]. The idea of machine learning is to make machines automatically learn rules from large amounts of data and use those rules to make predictions about unknown data. Among machine learning algorithms, deep learning refers to those that use the structure of a deep neural network to complete training and prediction [6,7].

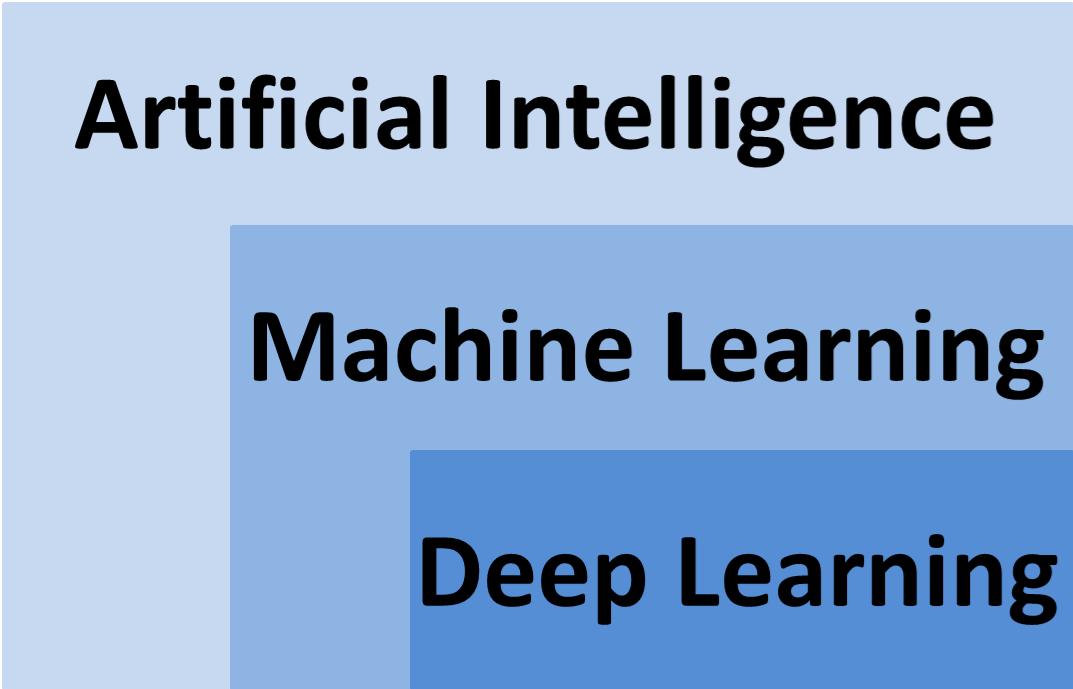

Machine learning is one of the methods of realizing artificial intelligence, and deep learning is one family of machine learning algorithms [8-10]. If artificial intelligence is compared to the human brain, machine learning is the process by which humans acquire cognition and learn from large amounts of data, and deep learning is a highly efficient algorithm within that learning process [11]. The relationship between artificial intelligence, machine learning and deep learning is shown in Figure 1.

Figure 1. The relationship between artificial intelligence, machine learning and deep learning [12].

2. Related works in AI algorithm

2.1. Artificial intelligence

The idea of artificial intelligence first came from the Dartmouth Conference in 1956. The idea was that machines would be able to think and react like human brains. Because of its difficulty and appeal, artificial intelligence has attracted numerous scientists and enthusiasts since its birth. The vehicle for AI could be robots, self-driving vehicles, or even a smart brain deployed in the cloud. By 2015, the widespread use of GPUs had made parallel processing faster, more powerful, and cheaper. Cheaper storage made it possible to store big data (from plain text to images, maps, etc.) on a large scale [13]. This created a need for data analytics, more commonly known as data science, and led to the development of machine learning as a way to achieve artificial intelligence.

According to the level of intelligence achieved, artificial intelligence can be further divided into three kinds.

Artificial Narrow Intelligence (ANI): Intelligence that specializes in a specific task. Google Translate, for example, handles language processing but is powerless to determine whether a picture shows a cat or a dog. Most AI in use today, such as automated spam sorting, self-driving cars and facial recognition on mobile phones, is narrow (weak) AI.

Artificial General Intelligence (AGI): When the concept of AI was born, it was expected that human-like complex intelligence would be achieved by building complex computers. This is known as strong AI, or Artificial General Intelligence [14]. It requires machines to be as proficient as humans in listening, speaking, reading and writing. The technology is not yet at this level, but a number of research institutions are working on it.

Artificial Super Intelligence (ASI): Beyond strong artificial intelligence lies artificial super intelligence, which is defined as intelligence that is smarter than the human brain in almost all fields, including innovation, social interaction and thinking. AI scientist Aaron Saenz once made an interesting analogy: today's weak AI is like the amino acids of the early Earth, which could suddenly give rise to life. Super AI will not remain in the imagination forever [15].

2.2. Machine learning

The roots of machine learning can be traced to Bayes' theorem, published in 1763. Bayes' theorem underlies a form of learning that uses historical data to estimate how likely similar events are to occur. In 1997, IBM's Deep Blue chess computer beat the world champion, Garry Kasparov. Of course, the most exciting achievement was AlphaGo's defeat of Lee Sedol in 2016.
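As a hedged illustration of how Bayes' theorem turns historical frequencies into a prediction, the sketch below estimates the probability that a message is spam given that it contains a particular word. The message counts, the word "prize" and the function name `posterior` are invented for illustration and do not come from the cited works.

```python
def posterior(prior, likelihood, likelihood_given_not):
    """Bayes' theorem: P(H | E) = P(E | H) * P(H) / P(E)."""
    # P(E) by the law of total probability over H and not-H.
    evidence = likelihood * prior + likelihood_given_not * (1.0 - prior)
    return likelihood * prior / evidence

# Hypothetical historical data: 1000 past messages, 200 of them spam.
# The word "prize" appeared in 120 spam messages and 40 legitimate ones.
p_spam = 200 / 1000
p_word_given_spam = 120 / 200
p_word_given_ham = 40 / 800

p_spam_given_word = posterior(p_spam, p_word_given_spam, p_word_given_ham)
print(round(p_spam_given_word, 2))  # 0.75, i.e. 120 of the 160 "prize" messages
```

The prior of 0.2 is revised to 0.75 once the word is observed, which is the sense in which past data informs the estimate for a new event.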

Machine learning occupies a central position as a branch of artificial intelligence. As the name suggests, the study of machine learning aims to enable computers to learn: to simulate human learning behavior, improve their own abilities, and perform recognition and judgment. Machine learning uses algorithms to interpret large amounts of data, find patterns, and then use what has been learned to make decisions and predictions about real events. This process is also known as "training". Machine learning is an important way to realize artificial intelligence, and it is also the earliest developed family of artificial intelligence algorithms. Unlike traditional algorithms based on hand-designed rules, the key to machine learning is to find rules in large amounts of data and to learn automatically the parameters that the algorithm requires.
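A minimal sketch of "learning the parameters from data" is given below, assuming the simplest possible model, a straight line fitted by ordinary least squares. The data set and the name `fit_line` are hypothetical; the point is only that the slope and intercept are computed from examples rather than written by hand.

```python
def fit_line(xs, ys):
    """Learn slope a and intercept b of y = a*x + b by least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

# Hypothetical training data produced by the hidden rule y = 2x + 1.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [1.0, 3.0, 5.0, 7.0, 9.0]

a, b = fit_line(train_x, train_y)  # parameters are learned, not hand-coded
prediction = a * 10.0 + b          # prediction for an unseen input x = 10
print(a, b, prediction)            # 2.0 1.0 21.0
```

A rule-based program would have the constants 2 and 1 typed in by the programmer; here they are recovered from the examples and then reused on data the model has not seen.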

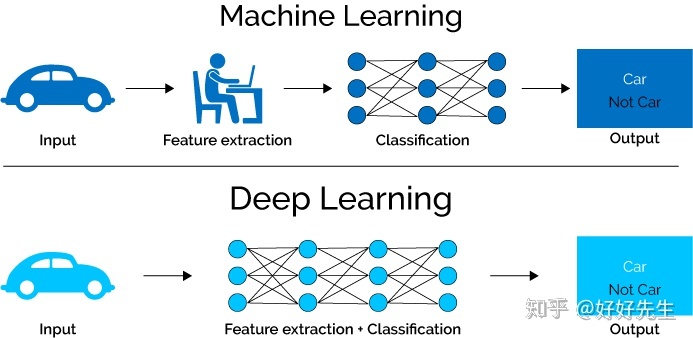

Figure 2. Processing structure of machine learning and deep learning [16].

2.3. Deep learning

Deep learning is a technology proposed by Hinton et al. in 2006, and it is a technology for realizing machine learning. Deep learning carries out complicated, large-scale, high-level computation on data by constructing deep artificial neural networks. It can be regarded as one of the biggest breakthroughs in the field of machine learning. Deep learning draws on the bionics that grew out of research on neurons and neural networks: it imitates the way human neural networks transmit and receive signals, and learns in a way modeled on human thinking in order to achieve a given goal.

The earliest neural network can be traced back to the MCP (McCulloch and Pitts) artificial neuron model of 1943, which hoped to use simple weighted summation and activation functions to simulate the human neural process. On this basis, the Perceptron model of 1958 used a gradient descent algorithm to learn from multi-dimensional training data, successfully solved binary classification problems, and set off the first wave of neural network research. The figure below represents the simplest single-layer perceptron: three inputs are multiplied by weights, summed, and passed through an activation function to give the output y.
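The single-layer perceptron with three inputs can be sketched as follows. This is an illustrative reconstruction, not the 1958 implementation: it uses a step activation and the classic perceptron update rule (which can be viewed as a stochastic gradient step on the perceptron loss), and the toy task, learning rate and function names are assumptions.

```python
def step(z):
    """Threshold activation: output 1 when the weighted sum is positive."""
    return 1 if z > 0 else 0

def predict(weights, bias, x):
    """Weighted sum of the inputs followed by the activation function."""
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)

def train_perceptron(samples, epochs=100, lr=1.0):
    """Perceptron rule: adjust weights only when a sample is misclassified."""
    weights, bias = [0.0, 0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)
            if error != 0:
                mistakes += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:  # every sample is classified correctly
            break
    return weights, bias

# Toy binary task (an assumption): output 1 when at least two of the
# three binary inputs are 1. This task is linearly separable.
samples = [((a, b, c), int(a + b + c >= 2))
           for a in (0, 1) for b in (0, 1) for c in (0, 1)]

weights, bias = train_perceptron(samples)
outputs = [predict(weights, bias, x) for x, _ in samples]
print(outputs == [t for _, t in samples])
```

Because the task is linearly separable, the update rule is guaranteed to stop making mistakes after a finite number of corrections; a single-layer perceptron cannot do the same for tasks such as XOR, which motivates the multilayer models discussed next.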

In 1986, Geoffrey Hinton, one of the "big three" of deep learning, creatively applied the nonlinear sigmoid function to the multilayer perceptron and used the backpropagation algorithm to train the model, which enabled it to deal with nonlinear problems effectively. In 1998, Yann LeCun, another of the "big three" and the father of convolutional neural networks, invented the LeNet convolutional neural network model, which can effectively solve the problem of handwritten digit recognition and is regarded as the originator of convolutional neural networks.
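The combination of sigmoid units and backpropagation can be illustrated with a very small network trained on XOR, a problem that a single-layer model cannot solve. This is a sketch under assumed settings (three hidden units, learning rate 0.5, a fixed random seed, squared-error loss), not a reproduction of the 1986 experiments.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w_hidden, b_hidden, w_out, b_out):
    """Forward pass: inputs -> sigmoid hidden layer -> sigmoid output."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(w_hidden, b_hidden)]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, output

def mean_squared_error(data, params):
    return sum((forward(x, *params)[1] - t) ** 2 for x, t in data) / len(data)

def train(data, n_hidden=3, epochs=2000, lr=0.5, seed=0):
    rng = random.Random(seed)
    w_hidden = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(n_hidden)]
    b_hidden = [0.0] * n_hidden
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    b_out = 0.0
    initial = mean_squared_error(data, (w_hidden, b_hidden, w_out, b_out))
    for _ in range(epochs):
        for x, t in data:
            hidden, y = forward(x, w_hidden, b_hidden, w_out, b_out)
            # Backward pass: the chain rule gives each layer's error signal
            # for the loss 0.5 * (y - t)^2.
            delta_out = (y - t) * y * (1.0 - y)
            delta_hidden = [delta_out * w_out[j] * hidden[j] * (1.0 - hidden[j])
                            for j in range(n_hidden)]
            # Gradient descent step on every weight and bias.
            for j in range(n_hidden):
                w_out[j] -= lr * delta_out * hidden[j]
                for i in range(2):
                    w_hidden[j][i] -= lr * delta_hidden[j] * x[i]
                b_hidden[j] -= lr * delta_hidden[j]
            b_out -= lr * delta_out
    final = mean_squared_error(data, (w_hidden, b_hidden, w_out, b_out))
    return initial, final

xor_data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
initial_loss, final_loss = train(xor_data)
print(initial_loss, final_loss)
```

The error signal of the output unit is propagated backwards through the output weights to the hidden units, which is what allows the hidden layer to be trained at all. How close the final loss gets to zero depends on the seed and settings chosen here.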

In 2006, Hinton rebooted deep learning, suggesting that unsupervised pre-training followed by supervised fine-tuning could alleviate the problem of poor local optima. This year is also known as the first year of deep learning. The ReLU activation function, which was proposed in 2011, effectively alleviates the vanishing gradient phenomenon.
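The gradient-vanishing effect that ReLU is credited with alleviating can be shown numerically. The sketch below multiplies activation derivatives along a chain of layers; setting all weights to 1 and using a depth of 10 are simplifying assumptions made only to isolate the effect of the activation function.

```python
import math

def sigmoid_grad(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)           # never larger than 0.25

def relu_grad(z):
    return 1.0 if z > 0 else 0.0   # exactly 1 for active units

def gradient_through_layers(grad_fn, pre_activation, depth):
    """Product of activation derivatives along a chain of `depth` layers."""
    factor = 1.0
    for _ in range(depth):
        factor *= grad_fn(pre_activation)
    return factor

# Best case for the sigmoid (derivative 0.25 at z = 0) over 10 layers.
sigmoid_factor = gradient_through_layers(sigmoid_grad, 0.0, 10)
# An active ReLU unit (z > 0) over the same 10 layers.
relu_factor = gradient_through_layers(relu_grad, 1.0, 10)
print(sigmoid_factor, relu_factor)
```

Even in the sigmoid's best case the signal reaching the first layer is scaled by 0.25 to the tenth power, below one millionth, whereas the active ReLU path passes it through unchanged.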

Deep learning exploded when the AlexNet network "crushed" the second-place algorithm in the ImageNet image classification task in 2012 (see Figure 3). Since then, deep learning has developed at a remarkable pace. Excellent networks such as VGGNet and ResNet have been launched successively. In addition, deep learning has gradually shown its strength in classification, object detection, image segmentation and other fields, greatly exceeding the level of traditional algorithms. Of course, the development of deep learning cannot be separated from big data, GPUs and improved models.

Figure 3. Deep learning algorithm in the ImageNet [17].

3. Machine learning -- the approach to artificial intelligence

"Machine learning" is one of the main research fields of artificial intelligence. Its initial research motivation was to give computer systems the learning ability of humans, so as to achieve artificial intelligence. Professor Tom Mitchell, one of the founders of the field of machine learning, gave a classic definition in his textbook Machine Learning: machine learning is "using experience to improve the performance of a computer system itself". Generally speaking, experience is historical data (such as Internet data, scientific experiment data, etc.), systems are data models (such as decision trees, support vector machines, etc.), and performance is the ability of models to process new data (such as classification and prediction performance). Therefore, the basic task of machine learning is to analyze and model data intelligently.

As information technology has become networked and inexpensive, data in social life, scientific research and many other fields are being generated, collected and stored at an unprecedented speed and scale. How to process these data intelligently and make full use of the knowledge and value they contain has become a shared concern of academia and industry. It is against this background that machine learning has become commonplace. As an intelligent data processing technology, it plays an increasingly important role and has received considerable attention.

Deep learning is a special type of machine learning that works with big data. When the amount of data is relatively small, however, traditional machine learning methods may be more suitable. Deep learning allows for a wide range of applications, thereby expanding the scope of artificial intelligence. As we all know, strong artificial intelligence has not yet been realized, and early machine learning methods could not even realize weak artificial intelligence.

With early approaches, recognizing a stop sign in an image requires a great deal of hand coding. One must manually write classifiers such as edge detection filters so that the program can tell where the target starts and ends, a shape checker to check whether the detected object has eight sides, and a classifier that recognizes the letters "S-T-O-P". Using these hand-written classifiers, an algorithm can then be developed to make sense of the image and determine whether it contains a stop sign.
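The hand-coded approach to the stop sign example can be sketched as follows. The inputs (a list of polygon vertices and a recognized text string) are assumed to come from hypothetical upstream edge detection and character recognition steps, and all function names are invented for illustration.

```python
def count_edges(polygon):
    """Hand-written shape checker: a closed polygon has one edge per vertex."""
    return len(polygon)

def reads_stop(text):
    """Hand-written character rule for the letters S-T-O-P."""
    return text.strip().upper() == "STOP"

def looks_like_stop_sign(polygon, text):
    """Combine the hand-coded checks; nothing here is learned from data."""
    return count_edges(polygon) == 8 and reads_stop(text)

# Hypothetical detector outputs for two candidate objects.
octagon = [(3, 0), (7, 0), (10, 3), (10, 7), (7, 10), (3, 10), (0, 7), (0, 3)]
square = [(0, 0), (10, 0), (10, 10), (0, 10)]

print(looks_like_stop_sign(octagon, "stop"))  # True
print(looks_like_stop_sign(square, "stop"))   # False
```

Every rule has to be anticipated and written by a person, so a sign that is partly hidden, tilted or faded is missed unless someone adds yet another rule for that case.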

4. Deep learning -- technology that enables machine learning

Deep learning has enabled machine learning to achieve widespread practical application, thereby expanding the scope of artificial intelligence. It breaks tasks down in ways that make all kinds of machine assistance seem possible. Driverless cars, better preventive health care, and even better movie recommendations are either already here or on the horizon.

The principle of neural networks is inspired by the physical structure of our brains, with their interconnected neurons. But unlike neurons in the brain, which can connect to any other neuron within a certain distance, artificial neural networks have discrete layers, with fixed connections and directions of data propagation. For a long time, however, their contribution to "intelligence" was minimal.
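The layered, one-directional structure of an artificial neural network can be sketched in a few lines. The layer sizes, the weights and the choice of ReLU as the activation are arbitrary assumptions made for illustration.

```python
def dense_layer(inputs, weights, biases):
    """One layer: every unit sums its weighted inputs and applies ReLU."""
    return [max(0.0, sum(w * x for w, x in zip(unit_weights, inputs)) + b)
            for unit_weights, b in zip(weights, biases)]

def forward(inputs, layers):
    """Data flows in one direction, layer by layer, from input to output."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical network: 3 inputs -> 2 hidden units -> 1 output unit.
layers = [
    ([[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]], [0.0, -1.0]),
    ([[2.0, 1.0]], [0.5]),
]
result = forward([3.0, 2.0, 1.0], layers)
print(result)  # [6.5]
```

Each unit is connected only to the units of the previous layer, and values never flow backwards during prediction, in contrast to the freer connectivity of biological neurons described above.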

Machine learning is considered a form of artificial intelligence, and deep learning is in turn often seen as a form of machine learning; some people refer to it as a subset of machine learning. Machine learning uses simpler concepts such as predictive models, whereas deep learning uses artificial neural networks to simulate the way humans think and learn. As taught in high-school biology, neurons are the most important computational element in the human brain, and every neural connection can be thought of as a small computer. Neural networks in the brain process inputs such as sight, touch and smell. Like machine learning, deep learning systems require a lot of input information, usually in the form of large data sets. The artificial neural network then uses the data to ask a series of true/false binary questions involving highly complex mathematical calculations, and categorizes the data based on the answers it receives.

5. Discussion

Using standard machine learning methods, we must manually select relevant features of the image to train the machine learning model, which then refers to these features when analyzing and classifying new objects.
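A minimal sketch of this workflow is shown below: a person chooses the features, and a simple model is then trained on them. The two features (mean brightness and a crude count of horizontal edges), the tiny 3x3 "images" and the nearest-centroid classifier are all assumptions chosen to keep the example self-contained.

```python
def extract_features(image):
    """Hand-selected features: mean brightness and the number of horizontal
    brightness jumps. They are chosen by a person, not learned."""
    pixels = [p for row in image for p in row]
    brightness = sum(pixels) / len(pixels)
    edges = sum(1 for row in image
                for left, right in zip(row, row[1:]) if abs(left - right) > 0.5)
    return (brightness, float(edges))

def centroid(vectors):
    return tuple(sum(v[i] for v in vectors) / len(vectors)
                 for i in range(len(vectors[0])))

def train(labelled_images):
    """Nearest-centroid model: store the mean feature vector of each class."""
    by_label = {}
    for image, label in labelled_images:
        by_label.setdefault(label, []).append(extract_features(image))
    return {label: centroid(vs) for label, vs in by_label.items()}

def classify(model, image):
    """Assign the class whose stored centroid is closest in feature space."""
    feats = extract_features(image)
    return min(model, key=lambda label: sum((a - b) ** 2
               for a, b in zip(feats, model[label])))

training_set = [
    ([[0, 1, 0], [0, 1, 0], [0, 1, 0]], "striped"),
    ([[1, 0, 1], [1, 0, 1], [1, 0, 1]], "striped"),
    ([[1, 1, 1], [1, 1, 1], [1, 1, 1]], "flat"),
    ([[0, 0, 0], [0, 0, 0], [0, 0, 0]], "flat"),
]
model = train(training_set)

print(classify(model, [[1, 0, 1], [0, 1, 0], [1, 0, 1]]))  # striped
print(classify(model, [[1, 1, 1], [1, 1, 1], [1, 1, 0]]))  # flat
```

The classifier only ever sees the two numbers returned by `extract_features`, so its quality is bounded by how well those hand-picked features describe the images.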

Deep learning workflows can automatically extract relevant features from images. Deep learning is a type of end-to-end learning in which the network is given raw data and a task, such as classification, and learns to complete the task automatically. Another important difference is that deep learning algorithms scale with data, whereas shallow learning converges. Shallow learning refers to machine learning methods whose performance plateaus at a certain level even as users add more examples and training data.

When choosing machine learning, you can train models with different classifiers, examine their characteristics, and extract the best result. In addition, machine learning provides the flexibility to choose combinations of methods: you can try different classifiers and features to see which arrangement best fits your data. Deep learning, by contrast, is usually more computationally intensive, and machine learning techniques are often easier to use.

5.1. Human intervention

In machine learning systems, features such as pixel values, shapes and orientation must be identified and hand-coded by a person according to the data type, whereas deep learning systems attempt to learn these features without additional human intervention. Take face recognition as an example. The program first learns to detect and recognize the edges and lines of a face, then the most important parts of the face, and finally the whole face. A lot of data is involved. Over time, as the program trains itself, the probability of correct face recognition increases. The training is done using neural networks, which work in a way similar to the human brain and do not need to be reprogrammed.

5.2. Hardware

Because of the amount of data involved and the complexity of the computation, deep learning systems require more powerful hardware than simple machine learning systems. One important device is the graphics processing unit (GPU), whereas machine learning software can run on lower-end devices without high computing power [18].

5.3. Time

Deep learning systems involve large data sets, many parameters and complex mathematical formulas, so they require a lot of training time. Machine learning can take anywhere from a few seconds to a few hours, while deep learning can take anywhere from a few hours to a few weeks.

5.4. Methods

Algorithms used for machine learning usually break a problem into parts, analyze the parts separately, and combine the results into a solution. A deep learning system can solve the whole problem at once. For example, to have a program identify a specific object in an image (what it is and where it is, such as the license plate of a car in a parking lot), machine learning must complete two steps: first the object is detected, then it is identified. A deep learning program simply takes the image as input, and the trained program returns both the identified object and its location in the image.

5.5. Applications

Core applications of machine learning include predictive programs (for example, predicting stock prices or when and where the next hurricane will strike), spam identifiers, and programs for developing evidence-based treatment plans for patients. In addition to Netflix, music streaming services and facial recognition, another well-known application of deep learning is self-driving cars [19-21].

6. Conclusion

We hope that this study gives readers a basic understanding of machine learning and deep learning. The possibilities for machine learning and deep learning in the future are almost endless. The use of robots will inevitably increase, not just in manufacturing but in other areas as well. Deep learning can help doctors predict or screen for cancer earlier and save lives, so the medical industry will change. Deep learning can decompose tasks and realize all types of machine assistance, thus enabling many practical applications of machine learning. With the help of deep learning, artificial intelligence may reach the science-fiction state that human beings have long imagined.

In the eventual scenario in which unsupervised learning and machine learning evolve on their own, artificial intelligence may seem almost insignificant in the world of machine learning. Over time, the distinction between AI and machine learning will become clearer, and the two will reinforce each other. The development of machine learning is largely driven by data science and related applications, and its most direct manifestation is the progress and achievements of artificial intelligence; in turn, the development of artificial intelligence and data science makes machine learning more scientific and effective. As technology advances, intelligence may come to exist in non-living entities, and in the end it may be such "intelligence" rather than people that first achieves interstellar travel, travels through time, or even goes back in time.

References

[1]. He Kaiming, Zhang Xiangyu, Ren Shaoqing, et al. Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition.[J]. IEEE transactions on pattern analysis and machine intelligence,2015(9).

[2]. LeCun Yann, Bengio Yoshua, Hinton Geoffrey. Deep learning[J]. Nature, 2015(7553).

[3]. Nishio Mizuho. Special Issue on Machine Learning/Deep Learning in Medical Image Processing[J]. Applied Sciences,2021,11(23).

[4]. Wang Yi, Sun Junhai. Design and Implementation of Virtual Reality Interactive Product Software Based on Artificial Intelligence Deep Learning Algorithm[J]. Advances in Multimedia, 2022.

[5]. Garland Jack, Hu Mindy, Kesha Kilak, Glenn Charley, Duffy Michael, Morrow Paul, Stables Simon, Ondruschka Benjamin, Da Broi Ugo, Tse Rexson. An overview of artificial intelligence/deep learning[J]. Pathology,2021,53(S1).

[6]. Yongzhang Zhou, Jun Wang, Renguang Zuo, Fan Xiao, Wenjie Shen, Shugong Wang. Machine Learning Deep Learning and Implementation Language in Geological Field[J]. Journal of Autonomous Intelligence,2021,4(1).

[7]. Kaluarachchi Tharindu, Reis Andrew, Nanayakkara Suranga. A Review of Recent Deep Learning Approaches in Human-Centered Machine Learning[J]. Sensors,2021,21(7).

[8]. Xiaolei Sun. Study on Speech Recognition Method of Artificial Intelligence Deep Learning[J]. Journal of Physics: Conference Series, 2021, 1754(1).

[9]. Xiaolei Sun. Study on Speech Recognition Method of Artificial Intelligence Deep Learning[J]. Journal of Physics: Conference Series, 2021, 1754(1).

[10]. Meng XiangHe. Combining artificial intelligence - deep learning with Hi-C data to predict the functional effects of noncoding variants.[J]. Bioinformatics (Oxford, England),2020,37(10).

[11]. Nencka Andrew S. Editorial for "Top 10 Reviewer Critiques of Radiology Artificial Intelligence (AI) Articles: Qualitative Thematic Analysis of Reviewer Critiques of Machine Learning / Deep Learning Manuscripts Submitted to JMRI".[J]. Journal of magnetic resonance imaging : JMRI,2020,52(1).

[12]. Misawa M, Kudo S, Mori Y, et al. Current status and future perspective on artificial intelligence for lower endoscopy[J]. Digestive Endoscopy, 2020.

[13]. Welliver Sara, Chong Jaron. Top 10 Reviewer Critiques of Radiology Artificial Intelligence (AI) Articles: Qualitative Thematic Analysis of Reviewer Critiques of Machine Learning/Deep Learning Manuscripts Submitted to JMRI.[J]. Journal of magnetic resonance imaging: JMRI,2020,52(1).

[14]. Gregory Jules. Promising Artificial Intelligence–Machine Learning–Deep Learning Algorithms in Ophthalmology:Erratum[J]. Asia-Pacific Journal of Ophthalmology,2019,8(5).

[15]. Yan Bicheng. A robust deep learning workflow to predict multiphase flow behavior during geological sequestration injection and Post-Injection periods[J]. Journal of Hydrology,2022,607.

[16]. Li Joshua J.X. Tumour segmentation with deep learning model trained on immunostain-augmented pixel-accurate labels–Application on whole slide images of breast carcinoma[J]. Pathology,2022,54(11).

[17]. Gupta R, Krishnam S P, Schaefer P W, et al. An East Coast Perspective on Artificial Intelligence and Machine Learning Part 2: Ischemic Stroke Imaging and Triage[J]. Neuroimaging clinics of North America, 2020(4): 30.

[18]. Schuhmacher A. Big Techs and startups in pharmaceutical R&D–A 2020 perspective on artificial intelligence[J]. Drug Discovery Today, 2021.

[19]. Agarwal Piyush, Aghaee Mohammad, Tamer Melih, Budman Hector. A novel unsupervised approach for batch process monitoring using deep learning[J]. Computers and Chemical Engineering,2022,159.

[20]. Li Dongsheng. Frank. Towards automated extraction for terrestrial laser scanning data of building components based on panorama and deep learning[J]. Journal of Building Engineering,2022,50.

[21]. Pan Ning. A sensor data fusion algorithm based on suboptimal network powered deep learning[J]. Alexandria Engineering Journal,2022,61(9).

Cite this article

Yuan,H. (2023). Current perspective on artificial intelligence, machine learning and deep learning. Applied and Computational Engineering,19,116-122.

Data availability

The datasets used and/or analyzed during the current study will be available from the authors upon reasonable request.


About volume

Volume title: Proceedings of the 5th International Conference on Computing and Data Science

© 2024 by the author(s). Licensee EWA Publishing, Oxford, UK. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.
