1. Introduction

Earthquakes are among the most destructive natural disasters and often leave behind complex and dangerous rescue environments. Collapsed buildings create massive debris fields, while aftershocks and secondary disasters such as fire and electrical leakage pose ongoing threats to rescuers. Under such conditions, traditional manual methods for detecting vital signs show clear weaknesses. They are inefficient and highly constrained by the environment, and they often fail to detect trapped people promptly and accurately, which compromises rescue effectiveness [1]. In response, life detection technologies based on robotic systems have achieved some results. For example, portable devices designed by Beijing Institute of Technology can monitor the vital signs of disaster victims through robots and transmit the data to rescue command centers. However, these systems still face critical challenges: limited detection range in large-scale disaster areas, susceptibility to environmental interference (e.g., debris, poor weather), inability to capture comprehensive physiological indicators, and strong reliance on hardware, so that any malfunction can severely delay rescue efforts.

This study adopts a multi-dimensional research methodology. It systematically reviews domestic and international literature to analyze theoretical frameworks and technical bottlenecks. In addition, classic cases such as the Wenchuan earthquake are examined to assess the field performance of current technologies. On this basis, the study explores the applicability and optimization of multi-sensor fusion technology in complex post-disaster environments, with the aim of enhancing the rescue robot's ability to detect and locate vital signs and of making disaster rescue technology more intelligent and efficient [2].

2. Current state of post-earthquake rescue and research status of vital signs detection technology

Earthquake disaster relief is complex and high-risk. Debris from collapsed buildings not only impedes the movement of rescue workers but may also cause secondary injury to trapped people. Unpredictable aftershocks keep the rescue site under constant threat of structural instability, and new collapses may occur at any time. At the same time, secondary disasters such as fire, flooding and leakage make the rescue environment more dangerous and harder to control. Severe damage to infrastructure such as communications and transportation obstructs the delivery of relief supplies and the transmission of information, which complicates organization and coordination and increases the time cost and difficulty of the rescue response [3]. In these complex environments, traditional earthquake rescue models have obvious limitations in vital signs detection. Rescuers must manually operate detection equipment in high-risk environments, an approach with significant efficiency bottlenecks. In large-scale debris search and rescue, manual detection consumes a great deal of time and human resources and carries a high risk of missed detection. Environmental factors also interfere with manual detection in several ways: noise, dust and insufficient light in the ruins easily lead to misjudgment or failure of detection equipment, reducing the accuracy and reliability of the results. In addition, prolonged exposure to hazardous working conditions rapidly depletes the strength and concentration of rescue personnel, making it difficult to maintain the quality and continuity of detection work [4].

In recent years, in addition to the portable life detection device developed by Beijing Institute of Technology, similar technologies and devices have been developed. Some robots are equipped with advanced sensors, such as thermal imagers and sound sensors, which can improve the efficiency and accuracy of vital signs detection to a certain extent [5]. These technologies and devices have played a role in actual rescues and have provided new means and ideas for rescue work [6]. However, obvious technical bottlenecks remain. First, the effective detection distance of most equipment is limited, which makes wide-range, deep coverage of a disaster site difficult. Second, physical obstructions and electromagnetic interference are common in complex environments; they seriously weaken signal transmission quality and significantly reduce detection accuracy. Third, most existing equipment can obtain only basic vital sign information about the trapped person and cannot accurately monitor key physiological parameters such as heart rate, blood pressure and respiratory rate [7].

3. Problems facing vital signs detection technology for post-earthquake rescue robots

In a rescue robot's vital sign detection system, many kinds of sensors work together, but different types of sensors may interfere with one another. When radar sensors transmit and receive electromagnetic signals, they may cause electromagnetic interference in acoustic signal sensors, introducing errors into the collected sound signals. Similarly, infrared imaging sensors may be affected by light or heat emitted by other sensors, which degrades infrared image quality and reduces the accuracy with which trapped people are detected and located [8].

Current vital signs detection algorithms adapt poorly to complex environments. Noise and interference signals are ubiquitous in post-earthquake ruins; they seriously contaminate the data collected by sensors and increase the complexity of data processing. When dealing with such data, the feature extraction and information recognition capabilities of traditional algorithms struggle to meet real-time and accuracy requirements. Take acoustic signal processing as an example: when the background noise is strong, the algorithm easily misjudges the signal source and mistakes ambient noise for a trapped person's life signal. In addition, existing algorithms show computational efficiency bottlenecks when processing large volumes of data, which makes it difficult to meet the real-time detection requirements of emergency rescue. Algorithm optimization and technological innovation are urgently needed [9].

The post-earthquake debris environment is highly complex and uncertain. Irregular piles of rubble from collapsed buildings create rugged terrain. This not only severely challenges the maneuverability of the rescue robot but also seriously interferes with sensor detection. Reflection and scattering in such complex media significantly reduce the detection accuracy of radar sensors. Severe weather further complicates the detection environment and significantly raises the technical threshold for the environmental adaptability of rescue robots [10].

4. Multi-sensor fusion technology scheme and application

4.1. Principle of multi-sensor fusion technology

Multi-sensor fusion technology integrates heterogeneous sensors such as radar, infrared imaging and acoustic sensors into a collaborative sensing system that exploits the strengths of each sensor to achieve multi-dimensional, accurate detection of targets [11]. Based on the principle of electromagnetic wave reflection, the radar sensor uses the Doppler effect and micro-motion feature extraction to detect a target's motion state quickly and without contact, and to identify signs of life at long range. Infrared imaging sensors exploit the relationship between an object's infrared radiation and its temperature to capture the infrared radiation characteristic of the human body, and they recognize targets well in low light or under partial occlusion. The acoustic signal sensor collects environmental acoustic signals, applies signal processing algorithms such as the Fourier transform and wavelet denoising together with filtering, and captures the weak sounds emitted by trapped people, thereby identifying vital signs.

4.2. Application of sensors in vital signs detection

4.2.1. Radar sensor

In vital signs detection, radar sensors are mainly used to detect the motion state and distance of targets. By analyzing Doppler frequency shift and micromotion frequency shift data, it is possible to judge whether the target shows life activity and to estimate its approximate position and direction of movement. When the radar sensor detects a weak periodic micromotion frequency shift, the signal may be caused by the breathing or heartbeat of a trapped person, which indicates the presence of vital signs [12]. The following example of radar detection based on the Doppler effect introduces the specific calculation formulas [13]:

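(1) Doppler frequency shift calculation formula: When the human body produces micromotion due to heartbeat and respiration, the reflected radar signal undergoes a Doppler frequency shift $f_d$:

$$f_d(t) = \frac{2\,v(t)}{\lambda}$$

Where $v(t)$ is the radial velocity of the body surface relative to the radar and $\lambda$ is the wavelength of the transmitted signal.

(2) From Doppler frequency shift to micromotion displacement calculation: By integrating the Doppler frequency shift signal, the displacement information of human body micromotion can be obtained. If the Doppler shift is $f_d(t)$, the micromotion displacement $x(t)$ is:

$$x(t) = \int_0^t v(\tau)\,d\tau = \frac{\lambda}{2}\int_0^t f_d(\tau)\,d\tau$$

This is because the Doppler shift is proportional to velocity, and the integral of velocity over time is displacement. In practical applications, digital signal processing methods are usually used to approximate the integral by accumulating the discrete Doppler frequency shift samples.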
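The accumulation step can be illustrated with a minimal sketch. It assumes the standard relation v = λ·f_d / 2 between Doppler shift and radial velocity and uses a rectangular-rule running sum as the discrete integral. The function name, the 24 GHz wavelength, the sampling rate and the synthetic breathing motion are all illustrative assumptions, not values from the study.

```python
import math

def displacement_from_doppler(doppler_hz, wavelength_m, sample_rate_hz):
    """Accumulate sampled Doppler shifts into displacement (metres).

    Uses v = wavelength * f_d / 2 and a rectangular-rule running sum
    as the discrete approximation of the time integral.
    """
    dt = 1.0 / sample_rate_hz
    displacement = []
    x = 0.0
    for f_d in doppler_hz:
        velocity = wavelength_m * f_d / 2.0   # radial velocity in m/s
        x += velocity * dt                    # one integration step
        displacement.append(x)
    return displacement

# Hypothetical test signal: chest surface moving as x(t) = A*sin(2*pi*f*t),
# so the Doppler shift is f_d(t) = (2 / wavelength) * dx/dt.
WAVELENGTH = 0.0125            # assumed 24 GHz radar, about 12.5 mm
FS = 1000.0                    # assumed sampling rate in Hz
AMP, BREATH_HZ = 0.005, 0.25   # assumed 5 mm excursion at 15 breaths/min

n_samples = int(FS * 2)        # two seconds of data
doppler = [
    (2.0 / WAVELENGTH) * AMP * 2 * math.pi * BREATH_HZ
    * math.cos(2 * math.pi * BREATH_HZ * (i / FS))
    for i in range(n_samples)
]
x = displacement_from_doppler(doppler, WAVELENGTH, FS)
peak_mm = max(x) * 1000.0      # recovered peak displacement in millimetres
```

In this synthetic case the recovered peak displacement should be close to the 5 mm excursion that generated the Doppler samples. A real system would also need to remove drift caused by integrating noise.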

(3) Heartbeat and respiratory frequency extraction: After the micro-motion displacement signal of the human body is obtained, spectrum analysis is the key means of extracting heartbeat and respiratory frequency, and the fast Fourier transform (FFT) is a commonly used algorithm. For a micro-motion displacement signal x(n) containing N sampling points (n = 0, 1, …, N-1 is the sample index), the FFT yields a frequency-domain sequence X(k), k = 0, 1, …, N-1. For a sampling rate f_s, bin k corresponds to the frequency k·f_s/N, and the frequency corresponding to a spectral peak is a main frequency component of the micro-motion signal. According to the physiological characteristics of the human body, the heartbeat frequency is usually in the range of 0.8-3 Hz, and the respiratory frequency is concentrated in the range of 0.1-0.5 Hz. Heart rate and respiratory rate can therefore be quantified by identifying peaks in the corresponding frequency intervals of the spectrum. For example, a significant spectral peak at 1.2 Hz can be attributed to the heartbeat (72 beats per minute), while a peak at 0.2 Hz may correspond to respiration (12 breaths per minute).
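The band-limited peak search described above can be sketched as follows. This is an illustration rather than the study's implementation: it uses a direct O(N²) discrete Fourier transform from the standard library in place of an FFT routine, and the sampling rate, signal length and amplitudes of the synthetic displacement signal are assumptions chosen so that both components fall on exact frequency bins.

```python
import cmath
import math

def dft_magnitudes(signal):
    """Return |X(k)| for k = 0 .. N/2 using a direct (O(N^2)) DFT."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(signal[i] * cmath.exp(-2j * math.pi * k * i / n)
                  for i in range(n))
        mags.append(abs(acc))
    return mags

def peak_frequency(mags, sample_rate_hz, n, band):
    """Frequency (Hz) of the largest magnitude inside band = (lo, hi)."""
    lo, hi = band
    candidates = [k for k in range(len(mags))
                  if lo <= k * sample_rate_hz / n <= hi]
    best = max(candidates, key=lambda k: mags[k])
    return best * sample_rate_hz / n

# Synthetic displacement in millimetres (assumed values): respiration at
# 0.2 Hz with 4 mm amplitude plus heartbeat at 1.2 Hz with 0.3 mm amplitude.
FS, N = 10.0, 400              # 40 s of data, 0.025 Hz frequency resolution
signal = [
    4.0 * math.sin(2 * math.pi * 0.2 * i / FS)
    + 0.3 * math.sin(2 * math.pi * 1.2 * i / FS)
    for i in range(N)
]

mags = dft_magnitudes(signal)
resp_hz = peak_frequency(mags, FS, N, (0.1, 0.5))    # respiratory band
heart_hz = peak_frequency(mags, FS, N, (0.8, 3.0))   # heartbeat band
resp_per_min = resp_hz * 60.0
heart_bpm = heart_hz * 60.0
```

Searching each physiological band separately keeps the much stronger respiratory component from masking the heartbeat peak. With real data, respiratory harmonics can fall inside the heartbeat band, so additional filtering is usually needed.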

4.2.2. Infrared imaging sensor

Based on the infrared radiation characteristics of objects, the infrared imaging sensor recognizes human targets efficiently in complex environments. It captures the temperature difference between the human body and the environment, converts infrared radiation energy into a visual image, and then locates the trapped person and presents their contour [15]. Its advantage is most prominent in ruins with little light, where it can significantly improve the efficiency of searching for trapped people and provide vital support for rescue operations.

According to the theory of blackbody radiation, any object above absolute zero (-273.15℃) continuously emits infrared radiation. The human body, at its normal body temperature, is a stable source of infrared radiation. The infrared imaging sensor takes an infrared detector as its core component and detects targets by sensing infrared radiation intensity. In low-light environments, visible light signals are lacking, but the infrared radiation of the human body remains significant, so sensors can detect human targets by capturing it. In addition, infrared light is attenuated less than visible light in smoke, so the sensor can still detect effectively in smoke-filled disaster scenes.

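According to Planck's law of radiation, the infrared radiation intensity of an object is a function of its thermodynamic temperature. For an ideal blackbody, the spectral radiance is:

$$B_\lambda(T) = \frac{2hc^2}{\lambda^5}\,\frac{1}{e^{hc/(\lambda k_B T)} - 1}$$

Where $B_\lambda(T)$ is the spectral radiance at wavelength $\lambda$, $T$ is the thermodynamic temperature, $h$ is the Planck constant, $c$ is the speed of light in vacuum, and $k_B$ is the Boltzmann constant.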
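To make the temperature dependence concrete, the sketch below evaluates Planck's law in its standard blackbody spectral-radiance form, B_λ(T) = (2hc²/λ⁵) / (exp(hc/(λ·k_B·T)) − 1), and locates the wavelength of peak emission by a simple grid search. Treating skin and debris as ideal blackbodies, and the two temperatures used, are simplifying assumptions for illustration.

```python
import math

H = 6.62607015e-34      # Planck constant, J*s
C = 2.99792458e8        # speed of light in vacuum, m/s
K_B = 1.380649e-23      # Boltzmann constant, J/K

def spectral_radiance(wavelength_m, temperature_k):
    """Blackbody spectral radiance B_lambda(T) in W / (sr * m^3)."""
    exponent = H * C / (wavelength_m * K_B * temperature_k)
    return (2.0 * H * C**2 / wavelength_m**5) / math.expm1(exponent)

def peak_wavelength_um(temperature_k, lo_um=1.0, hi_um=30.0, step_um=0.01):
    """Wavelength (micrometres) of maximum radiance, found on a grid."""
    steps = int(round((hi_um - lo_um) / step_um))
    grid = [lo_um + i * step_um for i in range(steps + 1)]
    return max(grid, key=lambda um: spectral_radiance(um * 1e-6, temperature_k))

body_peak = peak_wavelength_um(310.0)     # body at about 37 degrees C
rubble_peak = peak_wavelength_um(283.0)   # assumed 10 degrees C debris
# Radiance ratio at 10 micrometres between the body and the cooler debris.
contrast = spectral_radiance(10e-6, 310.0) / spectral_radiance(10e-6, 283.0)
```

Under these assumptions the body's emission peaks near 9.3 µm, inside the 8-14 µm long-wave infrared band used by thermal imagers, and the body is noticeably brighter than cooler debris at 10 µm. This radiance difference is what the sensor converts into image contrast.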

4.2.3. Acoustic signal sensors

The acoustic signal sensor detects vital signs by acquiring environmental acoustic signals. When a trapped person makes a sound such as shouting or knocking, the sensor captures the sound signal and suppresses environmental noise through signal processing to extract effective vital sign information. By analyzing characteristic parameters of the acoustic signal such as its frequency components, intensity distribution and duration, the physiological state of the trapped person can be evaluated, their position can be estimated, and a key basis for rescue decisions can be provided. The theoretical mechanism and mathematical model are described below.

Sound signal collection: The acoustic signal sensor generally uses a microphone as the sound collection element. Microphones work on the basis of the piezoelectric effect, capacitance change or electromagnetic induction. An ordinary condenser microphone, for example, consists of a diaphragm and a fixed electrode. When sound waves strike the diaphragm, it vibrates, changing the distance between the diaphragm and the fixed electrode and therefore the capacitance. This change in capacitance is converted into an electrical signal, thereby converting the acoustic signal into an electrical signal [17]. The propagation of sound can be described:

Where

Denoising methods and related processing [18]:

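(1) Denoising based on the Fourier transform: The Fourier transform (FT) is a mathematical tool for converting time-domain signals into frequency-domain signals, with the formula:

$$X(f) = \int_{-\infty}^{\infty} x(t)\, e^{-j 2\pi f t}\, dt$$

Where $x(t)$ is the time-domain signal, $X(f)$ is its frequency-domain representation, $f$ is the frequency, and $j$ is the imaginary unit.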
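One simple way to use the Fourier transform for denoising is to transform the signal, discard the weak frequency bins that are dominated by noise, and transform back. The sketch below illustrates this with a direct discrete Fourier transform from the standard library. The magnitude threshold, the single-tone test signal and the noise level are illustrative assumptions, not parameters from the study.

```python
import cmath
import math
import random

def dft(x):
    """Direct discrete Fourier transform (O(N^2))."""
    n = len(x)
    return [sum(x[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n))
            for k in range(n)]

def idft(spectrum):
    """Inverse DFT, returning the real part of each sample."""
    n = len(spectrum)
    return [(sum(spectrum[k] * cmath.exp(2j * math.pi * k * i / n)
                 for k in range(n)) / n).real
            for i in range(n)]

def fourier_denoise(x, keep_ratio=0.5):
    """Zero every frequency bin whose magnitude is below keep_ratio * max."""
    spectrum = dft(x)
    threshold = keep_ratio * max(abs(c) for c in spectrum)
    return idft([c if abs(c) >= threshold else 0 for c in spectrum])

def rms_error(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)) / len(a))

rng = random.Random(0)                     # fixed seed for a repeatable run
N = 128
clean = [math.sin(2 * math.pi * 4 * i / N) for i in range(N)]   # 4 cycles
noisy = [c + rng.gauss(0.0, 0.3) for c in clean]
denoised = fourier_denoise(noisy)
err_before = rms_error(noisy, clean)
err_after = rms_error(denoised, clean)
```

Thresholding works here because the tone concentrates its energy in two bins while white noise spreads over all of them. Broadband sounds such as knocking do not separate this cleanly, which is one motivation for the wavelet approach.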

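(2) Wavelet denoising: The wavelet transform is another common denoising method, which can analyze signals at different resolutions. The wavelet transform formula is:

$$W(a, b) = \frac{1}{\sqrt{|a|}} \int_{-\infty}^{\infty} x(t)\, \psi^{*}\!\left(\frac{t - b}{a}\right) dt$$

Where $x(t)$ is the signal to be analyzed, $\psi(t)$ is the mother wavelet, $\psi^{*}$ denotes its complex conjugate, $a$ ($a \neq 0$) is the scale factor, and $b$ is the translation factor.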
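Wavelet denoising is usually carried out with the discrete wavelet transform: decompose the signal, shrink the small detail coefficients, and reconstruct. The sketch below uses the Haar wavelet because it is the simplest to write out by hand, together with soft thresholding at the commonly used universal threshold σ·sqrt(2·ln N). The choice of wavelet, the three decomposition levels, the known noise level σ and the pulse-shaped test signal are all assumptions made for illustration.

```python
import math
import random

def haar_forward(x):
    """One level of the orthonormal Haar transform: (approximation, detail)."""
    s = math.sqrt(2.0)
    approx = [(x[2 * i] + x[2 * i + 1]) / s for i in range(len(x) // 2)]
    detail = [(x[2 * i] - x[2 * i + 1]) / s for i in range(len(x) // 2)]
    return approx, detail

def haar_inverse(approx, detail):
    """Invert one Haar level, interleaving the reconstructed samples."""
    s = math.sqrt(2.0)
    out = []
    for a, d in zip(approx, detail):
        out.extend([(a + d) / s, (a - d) / s])
    return out

def soft(value, threshold):
    """Soft thresholding: shrink the coefficient toward zero."""
    return math.copysign(max(abs(value) - threshold, 0.0), value)

def haar_denoise(x, levels, threshold):
    """Decompose, soft-threshold the detail coefficients, reconstruct."""
    approx, details = list(x), []
    for _ in range(levels):
        approx, d = haar_forward(approx)
        details.append([soft(v, threshold) for v in d])
    for d in reversed(details):
        approx = haar_inverse(approx, d)
    return approx

def rms_error(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)) / len(a))

rng = random.Random(1)                     # fixed seed for a repeatable run
N, SIGMA = 128, 0.2                        # assumed length and noise level
clean = [1.0 if 32 <= i < 96 else 0.0 for i in range(N)]   # knock-like pulse
noisy = [c + rng.gauss(0.0, SIGMA) for c in clean]
threshold = SIGMA * math.sqrt(2.0 * math.log(N))
denoised = haar_denoise(noisy, levels=3, threshold=threshold)
err_before = rms_error(noisy, clean)
err_after = rms_error(denoised, clean)
```

Because the transform is orthonormal, a threshold of zero reproduces the input exactly, which is a convenient sanity check. In practice σ is unknown and is typically estimated from the finest detail coefficients.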

Filter assisted vital signs judgment: Commonly used filtering methods include low-pass filtering, band-pass filtering, etc. Low-pass filters can pass low-frequency signals and suppress high-frequency signals, and their transfer functions can generally be expressed as:

Where
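As an illustration of low-pass filtering, the sketch below assumes the simplest case, a first-order filter with transfer function H(s) = 1 / (1 + s/ω_c), where ω_c = 2π·f_c is the cutoff angular frequency. This specific form is an assumption chosen for the example. The filter is discretized as an exponential smoothing recursion, and the 2 Hz cutoff, the sampling rate and the two test tones are likewise illustrative.

```python
import math

def low_pass(signal, cutoff_hz, sample_rate_hz):
    """First-order low-pass filter: y[n] = y[n-1] + alpha * (x[n] - y[n-1])."""
    dt = 1.0 / sample_rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)   # time constant 1 / omega_c
    alpha = dt / (rc + dt)
    out, y = [], signal[0]
    for x in signal:
        y += alpha * (x - y)
        out.append(y)
    return out

def amplitude(signal):
    """Half the peak-to-peak range over the second half (after settling)."""
    tail = signal[len(signal) // 2:]
    return (max(tail) - min(tail)) / 2.0

FS = 500.0                                   # assumed sampling rate in Hz
N = int(FS * 20)                             # 20 s of samples
slow = [math.sin(2 * math.pi * 0.2 * i / FS) for i in range(N)]    # breathing-like
fast = [math.sin(2 * math.pi * 50.0 * i / FS) for i in range(N)]   # mains-like hum

slow_gain = amplitude(low_pass(slow, cutoff_hz=2.0, sample_rate_hz=FS))
fast_gain = amplitude(low_pass(fast, cutoff_hz=2.0, sample_rate_hz=FS))
```

With this cutoff the 0.2 Hz component passes almost unchanged while the 50 Hz component is strongly attenuated. A band-pass filter for the heartbeat range could be built on the same principle by combining a low-pass and a high-pass stage.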

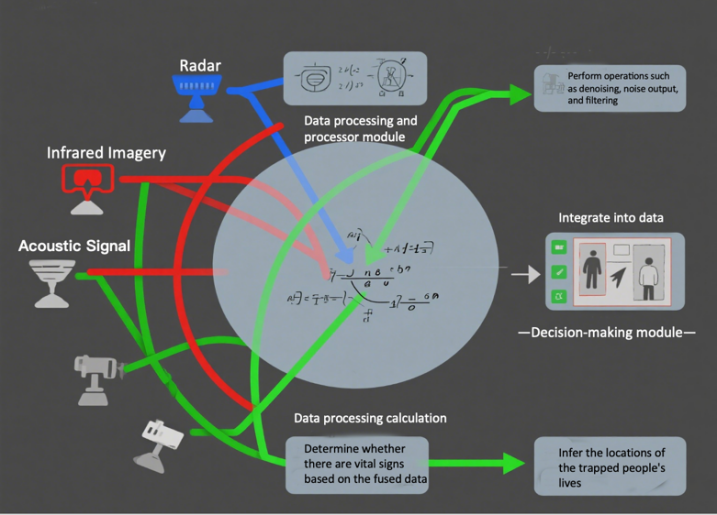

4.3. Flow chart of multi-sensor fusion technology

The multi-sensor fusion system consists of five core stages: data acquisition, preprocessing, algorithm fusion, decision analysis and result visualization. As shown in Figure 1, heterogeneous sensors such as radar, infrared imaging and acoustic sensors each collect data in their own mode (color-coded, for example, blue for radar data, red for infrared data and green for acoustic data). The collected data is immediately passed to the preprocessing module, where denoising and filtering remove interference and improve data quality and reliability. The preprocessed data then enters the fusion stage, where a Kalman filter, Bayesian inference or a deep learning fusion algorithm fuses the multi-source heterogeneous data at the feature level or decision level to obtain more accurate and comprehensive vital signs information. The fused data is input to the decision module, which determines, according to preset rules and models, whether vital signs are present and where trapped people are located, and the results are output to a visual interface to give rescuers an intuitive basis for decisions.

4.4. Analysis of fusion algorithm

In multi-sensor fusion technology, the fusion algorithm module is the core component that fuses data from different sensors. Common algorithms include the Kalman filter, Bayesian fusion and deep-learning-based fusion, each of which fuses data according to different principles and formulas.

4.4.1. Kalman filter algorithm

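The Kalman filter is a common recursive filtering algorithm, suitable for data-level fusion, which continuously refines the estimate of the system state through two steps, prediction and update [21]. Assume that the equation of state for the system is:

$$x_k = A x_{k-1} + B u_k + w_k$$

The observation equation is:

$$z_k = H x_k + v_k$$

Where $x_k$ is the state vector at time $k$, $A$ is the state transition matrix, $B$ is the control input matrix, $u_k$ is the control input, $z_k$ is the observation vector, $H$ is the observation matrix, and $w_k$ and $v_k$ are the process noise and the observation noise, respectively.

Prediction step: the state at the current moment is predicted from the state estimate at the previous moment:

$$\hat{x}_{k|k-1} = A \hat{x}_{k-1|k-1} + B u_k$$

Covariance of the predicted state:

$$P_{k|k-1} = A P_{k-1|k-1} A^{T} + Q$$

Where $P$ is the covariance matrix of the estimation error and $Q$ is the covariance matrix of the process noise.

Update step: the predicted state is corrected with the observation at the current time to obtain a more accurate state estimate. The Kalman gain is:

$$K_k = P_{k|k-1} H^{T} \left( H P_{k|k-1} H^{T} + R \right)^{-1}$$

The updated state estimate is:

$$\hat{x}_{k|k} = \hat{x}_{k|k-1} + K_k \left( z_k - H \hat{x}_{k|k-1} \right)$$

The updated covariance is:

$$P_{k|k} = \left( I - K_k H \right) P_{k|k-1}$$

Where $R$ is the covariance matrix of the observation noise and $I$ is the identity matrix.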
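The prediction and update steps can be illustrated with a minimal scalar sketch. It assumes the simplest possible model, a static target with state transition A = 1, no control input and direct observation H = 1, so that the matrices reduce to numbers. The scenario (estimating the range to a motionless trapped person from noisy readings) and the noise variances q and r are hypothetical values chosen for illustration.

```python
import random

def kalman_1d(measurements, q, r, x0=0.0, p0=1.0):
    """Scalar Kalman filter with A = 1, H = 1 and no control input.

    q: process noise variance, r: measurement noise variance.
    Returns the list of state estimates and the final error variance.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Prediction: the state is assumed static, so only uncertainty grows.
        x_pred = x
        p_pred = p + q
        # Update: blend prediction and measurement using the Kalman gain.
        k = p_pred / (p_pred + r)
        x = x_pred + k * (z - x_pred)
        p = (1.0 - k) * p_pred
        estimates.append(x)
    return estimates, p

rng = random.Random(42)                   # fixed seed for a repeatable run
TRUE_RANGE = 3.0                          # hypothetical range in metres
measurements = [TRUE_RANGE + rng.gauss(0.0, 0.5) for _ in range(200)]
estimates, final_p = kalman_1d(measurements, q=1e-5, r=0.25)
```

Readings from several sensors can be fed through the same update step one after another, each with its own measurement variance r, so that more reliable sensors pull the estimate more strongly. A moving target would require a state vector with position and velocity and the full matrix form.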

4.4.2. Bayesian fusion algorithm

Bayesian fusion is based on Bayes' theorem and is widely used in decision-level fusion. Bayes' theorem is formulated as:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

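In multi-sensor fusion, A represents different target states (such as the presence or absence of vital signs, the location of trapped people, etc.), and B represents the observations of individual sensors. If multiple sensors provide observations $B_1, B_2, \ldots, B_n$ that are conditionally independent given the target state, the posterior probability of the state is:

$$P(A \mid B_1, \ldots, B_n) = \frac{P(A)\,\prod_{i=1}^{n} P(B_i \mid A)}{P(B_1, \ldots, B_n)}$$

Where $P(A)$ is the prior probability of the target state, $P(B_i \mid A)$ is the likelihood of the observation of the $i$-th sensor given that state, and the denominator is a normalization constant.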
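A small worked example shows how evidence from several sensors accumulates under Bayes' theorem. The sketch assumes a binary target state (vital signs present or absent) and sensor observations that are conditionally independent given that state, which is a common simplification. The prior and all likelihood values are hypothetical numbers chosen for illustration, not measured sensor characteristics.

```python
def bayes_fuse(prior, likelihoods):
    """Posterior P(life | all observations) for a binary state.

    likelihoods: list of (P(B_i | life), P(B_i | no life)) pairs, one per
    sensor, assumed conditionally independent given the state.
    """
    p_life, p_none = prior, 1.0 - prior
    for l_life, l_none in likelihoods:
        p_life *= l_life
        p_none *= l_none
    return p_life / (p_life + p_none)     # normalize over both states

PRIOR = 0.1                               # assumed prior that someone is there
RADAR = (0.8, 0.1)                        # hypothetical detection likelihoods
INFRARED = (0.7, 0.2)
ACOUSTIC = (0.6, 0.3)

radar_only = bayes_fuse(PRIOR, [RADAR])
all_three = bayes_fuse(PRIOR, [RADAR, INFRARED, ACOUSTIC])
```

With these numbers a radar detection alone raises the probability from 0.10 to about 0.47, while agreement among all three sensors raises it to about 0.86. This is the sense in which fusion yields a more reliable decision than any single sensor.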

4.4.3. Fusion algorithm based on deep learning

Deep learning models can automatically learn features from data and perform well in feature layer fusion. Take CNN for example. It processes sensor data through convolutional layers, pooling layers, and fully connected layers [23]. Assuming that the input multi-sensor data is

Where
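The layer structure named above can be sketched as a forward pass of a very small one-dimensional network. Each sensor channel passes through its own convolution, ReLU activation and max pooling, the resulting features are concatenated (feature-level fusion), and a fully connected layer with a sigmoid produces a score. This is only a structural illustration: the architecture, the input windows and all weights are hand-picked hypothetical values, and no training is performed.

```python
import math

def conv1d(x, kernel, bias):
    """Valid 1-D cross-correlation, as used by CNN convolutional layers."""
    k = len(kernel)
    return [sum(x[i + j] * kernel[j] for j in range(k)) + bias
            for i in range(len(x) - k + 1)]

def relu(values):
    return [max(0.0, v) for v in values]

def max_pool(values, size):
    """Non-overlapping max pooling."""
    return [max(values[i:i + size])
            for i in range(0, len(values) - size + 1, size)]

def dense_sigmoid(features, weights, bias):
    """Fully connected layer with one sigmoid output."""
    z = sum(f * w for f, w in zip(features, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def forward(channels, kernels, conv_bias, fc_weights, fc_bias):
    """Per-sensor conv -> ReLU -> pool, concatenate, then dense layer."""
    features = []
    for x, kernel in zip(channels, kernels):
        features.extend(max_pool(relu(conv1d(x, kernel, conv_bias)), 2))
    return dense_sigmoid(features, fc_weights, fc_bias)

# Hypothetical, untrained parameters and short input windows per sensor.
KERNELS = [[1.0, 1.0, 1.0], [0.5, 0.5, 0.5], [0.0, 1.0, 0.0]]
FC_WEIGHTS = [0.5] * 6
FC_BIAS = -2.0

active = [
    [0.0, 1.0, 0.0, 1.0, 0.0, 1.0],       # radar micro-motion samples
    [0.2, 0.8, 0.9, 0.8, 0.2, 0.1],       # infrared intensity profile
    [0.0, 0.0, 1.0, 0.0, 0.0, 0.0],       # acoustic envelope with one knock
]
empty = [[0.0] * 6 for _ in range(3)]

score_active = forward(active, KERNELS, 0.0, FC_WEIGHTS, FC_BIAS)
score_empty = forward(empty, KERNELS, 0.0, FC_WEIGHTS, FC_BIAS)
```

In a trained network the kernels and fully connected weights would be learned from labeled sensor data rather than set by hand, and a deep learning framework would replace these loops.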

5. Advantages and application prospects of multi-sensor fusion technology

Multi-sensor fusion technology improves the efficiency with which rescue robots detect and locate vital signs by integrating the strengths of heterogeneous sensors. It combines the long-range detection capability of the radar sensor, the thermal radiation recognition of the infrared imaging sensor and the sound capture of the acoustic signal sensor, so that the rescue robot can detect the vital signs of trapped people and determine their position more efficiently and accurately in complex earthquake ruins.

In practical earthquake rescue, multi-sensor fusion technology has clear application value. In terms of rescue efficiency, it can locate trapped people quickly and win critical time for rescue operations, thereby improving their probability of survival. In terms of personnel safety, using the multi-sensor system carried by a rescue robot for vital sign detection can significantly reduce the exposure of rescue personnel to high-risk environments. In addition, multi-sensor fusion can provide multi-modal vital sign information that helps rescuers comprehensively assess the physiological state of trapped individuals, formulate more scientific and accurate rescue plans, and improve the overall success rate and quality of rescue operations.

Multi-sensor fusion technology also has great potential in the field of earthquake rescue. As the technology continues to evolve, optimized designs can improve the detection performance of sensors while reducing device size and power consumption. This will enable rescue robots to integrate more types of sensors and achieve multi-dimensional, full-coverage vital sign detection. At the algorithm level, integrating artificial intelligence, big data analysis and other cutting-edge technologies into the fusion algorithm is expected to significantly improve the accuracy and real-time performance of detection results [24]. Furthermore, the technology adapts well to different scenes and is portable, so it can be extended to other disaster rescue scenarios such as fire, flood and debris flow, providing more efficient and reliable technical solutions and promoting the innovative development of the entire disaster rescue technology system.

6. Conclusion

This study focuses on vital sign detection technology for rescue robots after earthquake disasters, with the aim of improving detection accuracy and efficiency in complex environments. By analyzing the shortcomings of traditional rescue methods and existing technologies, such as mutual interference between cooperating sensors, insufficient algorithm robustness, poor adaptability to complex environments, and defects in system compatibility and scalability, it clarifies the necessity of multi-sensor fusion technology and describes in detail its technical principle, the cooperative mechanism of heterogeneous sensors, the data fusion process and the algorithm implementation path. The analysis indicates that, by integrating multi-source sensor data from radar, infrared imaging, acoustic signals and other sources, this technology can significantly improve the accuracy of vital signs detection and the efficiency of positioning in complex environments.

Although initial results have been achieved, there is still room for improvement in the real-time performance of the system, mainly because of the high computational complexity of some fusion algorithms. In the future, the approach can be verified on a simulation platform closer to the real earthquake rescue environment, and algorithm restructuring and lightweight design can be combined to improve the system's response speed and practicality.

Looking ahead, vital signs detection technology for rescue robots will develop toward greater intelligence, precision and diversification. The introduction of artificial intelligence algorithms is expected to optimize the feature extraction process, while the application of new sensors such as biological or nano sensors will enhance detection sensitivity and parameter acquisition capabilities. In addition, combining cutting-edge technologies such as 5G and the Internet of Things makes it possible to build an efficient, coordinated intelligent rescue network that comprehensively improves disaster response capability.

References

[1]. Deng Nan. Life·Disaster·Reflection [D]. Northwestern University, 2021.

[2]. Zhao Zhiqiang. Conceptual design of earthquake rescue robot based on requirement and function evolutionary integration [D]. China University of Mining and Technology, 2023.

[3]. He Lehua, Xie Guangzhen, Liu Kexiang, et al. Fall detection based on YOLOv5 [J]. Journal of Jilin University (Information Science Edition), 2024, 42 (02): 378-386.

[4]. Wang Lun. Numerical simulation of pneumatic conveying of large granular materials for earthquake rescue [D]. Xiamen Institute of Technology, 2023.

[5]. Li Jiaqi. Study on the method of non-contact vital sign status recognition [D]. South China University of Technology, 2022.

[6]. Yin Wenfeng. Research on data processing and intelligent analysis methods in mobile medicine [D]. Beijing University of Posts and Telecommunications, 2019.

[7]. Xing Mengzhe. Research on passive deformation disaster information acquisition robot system for earthquake rescue [D]. Hebei University of Technology, 2015.

[8]. Limin C, Mingyu C, Shizhe X, et al. Analysis of Interference between Multiple Kinect Sensors and a Noise Reduction Method for Mobile Robots [J]. Journal of Convergence Information Technology, 2012, 7(21): 27-35.

[9]. Pan Binbin. A Survey of Algorithms for Mult objective Path Planning [J]. Journal of Chongqing Industrial and Commercial University (Natural Science Edition), 2012, 29 (05): 78-84.

[10]. Li Yixuan, Cha Yunfei. Application and development trend of common environment sensing sensors in intelligent driving field [J]. Automotive Abstracts, 2025, (04): 12-22.

[11]. Zhou Wanru. Research on Needle Tracking Based on Multisensory Fusion [D]. Xi'an University of Technology, 2023.

[12]. Bai Yingjie. Research on vital signs detection technology based on radar perception [D]. North China University of Science and Technology, 2023.

[13]. Liu Xuyang, Cui Hengrong, Liu Zhao, et al. Detection of Human Body Signs Based on Doppler Radar Sensor [J]. Sensors and Microsystems, 2020, 39 (05): 137-139.

[14]. YANG Bu-bin. Study on Doppler shift estimation in MSK-LFM radar communication integration [D]. Xidian University, 2021.

[15]. Wang Yan, Du Yong, Wu Jun, et al. Integrated design of a new infrared imaging temperature sensor [J]. Instrumentation and Analytical Monitoring, 2017, (01): 8-11.

[16]. Ma Guoqiang. Research on image quality enhancement algorithm for infrared thermal imaging [D]. Zhengzhou University of Light Industry, 2024.

[17]. Chen Chengyuan. Study on acoustic signal acquisition and location technology based on fiber laser sensor array [D]. Heilongjiang University, 2021.

[18]. Zhang Xudong, Zhan Yi, Ma Yongqin. Comparison of wavelet transform and Fourier transform in seismic data denoising [J]. Inner Mongolia Petrochemical, 2007, (07): 29-32+44.

[19]. Ju Huihui, Liu Zhigang, Jiang Jiangjun, et al. Removal of hyperspectral banding noise based on low pass filtered residual map [J]. Acta Optica Sinica, 2018, 38 (12): 361-369.

[20]. Zhang Lei. Research on vital signs detection algorithm based on FM CW radar [D]. Xiamen Institute of Technology, 2022.

[21]. Wang, M., Lian, Z., Núñez-Andrés, M. A., Wang, P., Tian, Y., Yue, Z., & Gu, L. (2024). Robot localization method based on multi-sensor fusion in low-light environment. Electronics, 13(22), 4346.

[22]. Lei W, Xunyan J, Weihua Z, et al. Bayesian decision based fusion algorithm for remote sensing images [J]. Scientific Reports, 2024, 14(1): 11558-11558.

[23]. Chen Xianchang. Deep learning algorithms and applications based on convolutional neural networks [D]. Zhejiang University of Industry and Commerce, 2014.

[24]. Sun Hua, Chen Junfeng, Wu Lin. Multisensory information fusion technology and its application in robot [J]. Sensor Technology, 2003, (09): 1-4.

Cite this article

Du, H. (2025). Research on Vital Sign Detection Technology for Rescue Robots after Earthquakes. Theoretical and Natural Science, 120, 8-18.

Data availability

The datasets used and/or analyzed during the current study will be available from the authors upon reasonable request.


About volume

Volume title: Proceedings of CONF-APMM 2025 Symposium: Controlling Robotic Manipulator Using PWM Signals with Microcontrollers

© 2024 by the author(s). Licensee EWA Publishing, Oxford, UK. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license. Authors who publish this series agree to the following terms:

1. Authors retain copyright and grant the series right of first publication with the work simultaneously licensed under a Creative Commons Attribution License that allows others to share the work with an acknowledgment of the work's authorship and initial publication in this series.

2. Authors are able to enter into separate, additional contractual arrangements for the non-exclusive distribution of the series's published version of the work (e.g., post it to an institutional repository or publish it in a book), with an acknowledgment of its initial publication in this series.

3. Authors are permitted and encouraged to post their work online (e.g., in institutional repositories or on their website) prior to and during the submission process, as it can lead to productive exchanges, as well as earlier and greater citation of published work (See Open access policy for details).
